FACS-grade facial expression analysis for research labs.

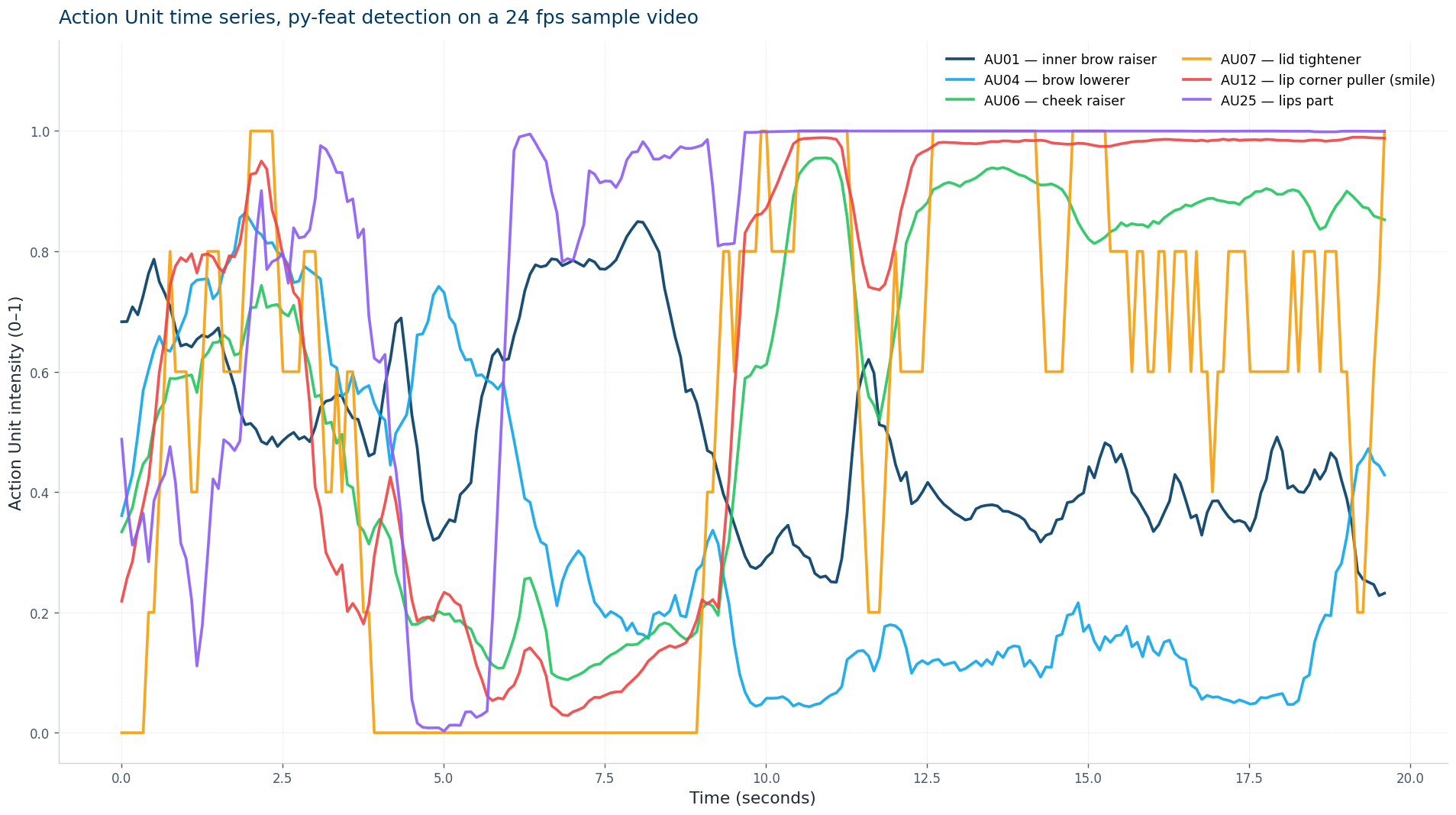

Local-first video processing, IRB-friendly by design. 20 Action Units, 7 emotions, valence and arousal, synced to a cloud dashboard for cross-lab work.

IRB-friendly local processing

Face video is analyzed on the lab machine. Raw footage never leaves your network.

20 FACS Action Units, 7 emotions

Per-frame Action Unit intensities, basic emotion probabilities, valence and arousal, exportable for SPSS or R.
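As a rough illustration of what a per-frame export could look like, here is a minimal sketch that writes frame records to CSV using only the Python standard library. The column names (`AU01`, `happiness`, `time_s`, and so on) and the values are hypothetical placeholders, not the product's documented export schema.

```python
import csv
import io

# Hypothetical column names for illustration; the real export schema may differ.
FIELDNAMES = [
    "frame", "time_s",
    "AU01", "AU06", "AU12",      # a subset of the 20 Action Units
    "happiness", "sadness",      # a subset of the 7 emotions
    "valence", "arousal",
]


def frames_to_csv(frames):
    """Serialize per-frame records (dicts) to CSV text, one row per frame."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDNAMES, lineterminator="\n")
    writer.writeheader()
    writer.writerows(frames)
    return buffer.getvalue()


# Made-up values, only to show the shape of the data.
example_frames = [
    {"frame": 0, "time_s": 0.0, "AU01": 0.1, "AU06": 0.7, "AU12": 0.8,
     "happiness": 0.9, "sadness": 0.02, "valence": 0.6, "arousal": 0.4},
    {"frame": 1, "time_s": 0.033, "AU01": 0.1, "AU06": 0.6, "AU12": 0.7,
     "happiness": 0.85, "sadness": 0.03, "valence": 0.55, "arousal": 0.4},
]

csv_text = frames_to_csv(example_frames)
```

A file in this shape, one row per frame and one column per measure, can be loaded with `read.csv` in R or through the text import dialog in SPSS.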

Cloud dashboard for collaboration

Only derived numerical timeseries sync. Share results across labs without sharing video.

How the data flows

A small local agent processes recorded sessions on the lab machine. Action Unit and emotion timeseries sync to the ConductScience cloud dashboard for visualization, comparison, and export. Raw face video stays on-prem.
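The separation between on-prem video and synced timeseries can be sketched as an allowlist filter applied before anything is uploaded. This is an illustrative sketch, not the agent's actual code: the function name `build_sync_payload`, the field names, and the emotion labels are all assumptions made for the example.

```python
import json

# Hypothetical field names; the agent's real schema is not documented here.
ALLOWED_FIELDS = {
    "frame", "time_s", "valence", "arousal",
    "anger", "disgust", "fear", "happiness", "sadness", "surprise", "neutral",
}


def is_derived_field(key):
    """True for allowlisted measures and for Action Unit columns such as 'AU12'."""
    return key in ALLOWED_FIELDS or (key.startswith("AU") and key[2:].isdigit())


def is_number(value):
    """Accept ints and floats, but not booleans (a subclass of int in Python)."""
    return isinstance(value, (int, float)) and not isinstance(value, bool)


def build_sync_payload(session_id, frames):
    """Build a JSON payload containing only derived numeric timeseries.

    Anything not on the allowlist (file paths, image bytes, free text)
    is dropped, so raw video data cannot end up in the upload.
    """
    cleaned = [
        {k: v for k, v in frame.items() if is_derived_field(k) and is_number(v)}
        for frame in frames
    ]
    return json.dumps({"session_id": session_id, "frames": cleaned})


# Made-up record that mixes derived values with data that must stay local.
raw_frames = [
    {"frame": 0, "time_s": 0.0, "AU12": 0.8, "happiness": 0.9,
     "valence": 0.6, "arousal": 0.4,
     "video_path": "/data/session1.mp4", "face_crop": b"\x89PNG"},
]

payload = build_sync_payload("session1", raw_frames)
```

An allowlist is used rather than a blocklist so that any new field added to the local records stays local by default until it is explicitly approved for sync.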

Built on py-feat (Cheong et al., 2023)

Action Unit and emotion classification use py-feat, a peer-reviewed open-source FACS toolbox validated on standard psychology datasets. We provide a downloadable methodology document covering model choices, validation, and recommended study protocols.

Cheong, J. H. et al. (2023). Py-Feat: Python Facial Expression Analysis Toolbox. Affective Science.

Psychology research

Quantify expressive behavior in studies of emotion regulation, social cognition, and developmental psychology.

UX research

Capture continuous emotional response during product walkthroughs without verbal interruption.

Market research

Measure reaction to creative, packaging, or product concepts with frame-level granularity.

Education research

Study classroom engagement and affect during learning interventions.

Request a demo

Academic license from $4,800 per year. Site licenses, hardware bundles, and clinical configurations available on request.

Be first in line

We are taking on a small set of pilot labs through the rest of the year. Join the waitlist for early access pricing and quarterly product updates.

Frequently asked questions

Where is the video processed?

On your lab machine. The local agent runs analysis offline. Only derived Action Unit and emotion timeseries are uploaded to the dashboard, and only if you opt in.

How does this compare to Noldus FaceReader?

ConductVision Face uses the open, peer-reviewed py-feat engine and adds a cloud dashboard for cross-lab collaboration. Pricing starts at about half the cost of comparable Noldus academic licenses.

What hardware do I need?

A modern lab workstation with a webcam or USB camera. Detailed hardware guidance is in the methodology document.

When does it ship?

Pilot rollout begins shortly. Waitlist members receive early access pricing.